BlawxBot

BlawxBot is a chatbot-style expert system that you can use in conjunction with any test you have defined on Blawx.

Controlling Access to BlawxBot

If your project is not published, only the project's owner can run BlawxBot from the test interface. If the project is set to "Published" in the Rule Editor screen, all of its tests are available inside the Blawx interface, through BlawxBot, and over the API.

To allow users other than yourself to use BlawxBot with your encoding, you must therefore set the project to "Published".

Starting BlawxBot

To start BlawxBot, click on the "Bot" button in the test editor screen.

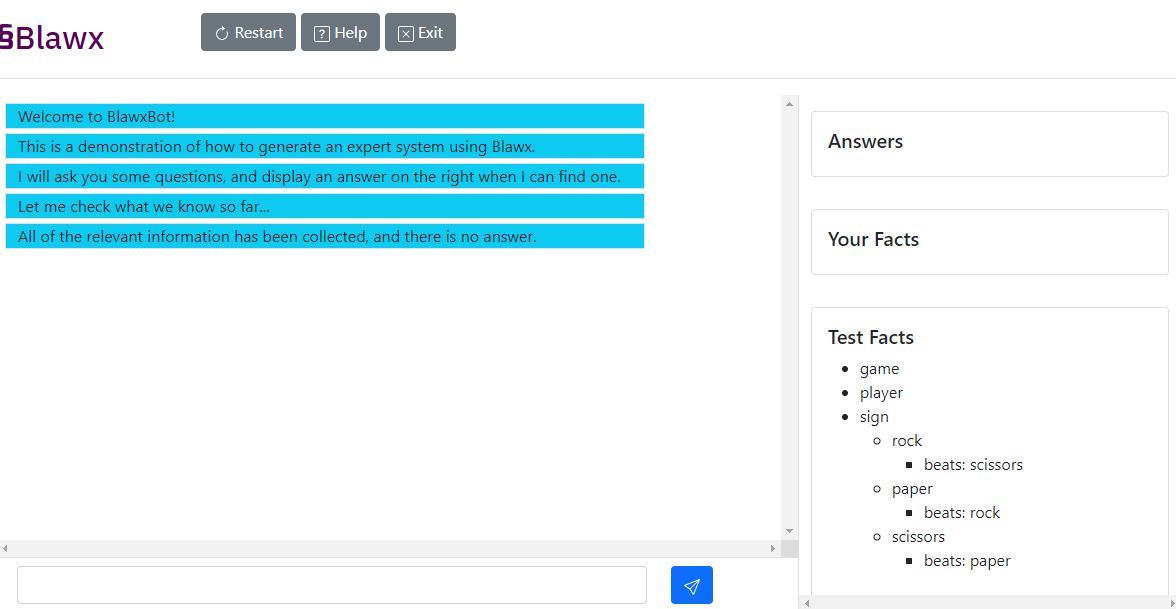

You will be taken to a user interface with a button bar across the top, a conversation window on the left, and a sidebar on the right.

Buttons

The buttons available in the BlawxBot interface are:

- Restart - restarts the expert system interview, forgetting all facts provided by the user in the conversation.

- Help - opens the Blawx documentation in a different window.

- Exit - closes BlawxBot and returns you to the test editor for the same test.

Sidebar

The sidebar on the right of the screen has three sections.

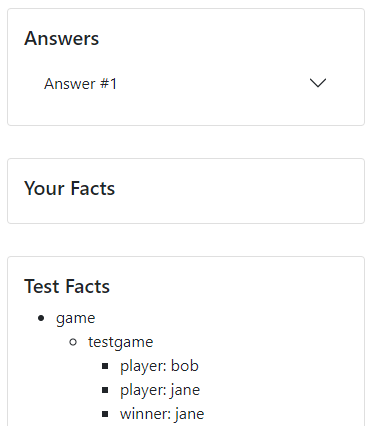

Answers

This section will display the first answer or answers that BlawxBot can find, with all the explanations for those answers, in a format similar to the output of the test editor.

Note that pressing "Run" in the test editor takes all the facts provided and finds every valid answer and explanation for those facts, whereas BlawxBot searches for valid answers using only what it knows so far, and stops asking for inputs as soon as it finds at least one valid answer. As a result, if you enter the same facts into BlawxBot that were entered into a similar test, BlawxBot may not find all the answers that the test found.

Test Facts

This section of the sidebar shows you the facts that BlawxBot already knows because they were defined in your test, or in the rule itself.

User Facts

This section of the sidebar shows you the facts that you have provided to BlawxBot so far in the interview.

Facts in the Test Facts and User Facts sections are displayed in a tree structure three layers deep. The first layer is the names of categories defined in the code. The second layer is the names of objects that have been declared to belong to that category. The third layer is the values that have been assigned to attributes of those objects, in the format attribute: value.
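As an illustration only, the three-layer tree can be pictured as nested Python dictionaries. The category, object, and attribute names below are invented, and storing each attribute's values in a list (an attribute can receive more than one value during the interview) is an assumption about how one might model the data, not a description of how Blawx stores it.

```python
# Hypothetical example data: these names are invented for illustration.
facts = {
    "person": {                                              # layer 1: category
        "alice": {"age": ["42"], "nickname": ["al", "ally"]},  # layer 2: object
        "bob": {},                                           # object with no values yet
    },
}


def render_tree(tree: dict) -> list[str]:
    """Return the tree as indented lines; layer 3 is shown as 'attribute: value'."""
    lines = []
    for category, objects in tree.items():
        lines.append(category)
        for obj, attributes in objects.items():
            lines.append("  " + obj)
            for attribute, values in attributes.items():
                # Each value of an attribute gets its own 'attribute: value' line.
                for value in values:
                    lines.append(f"    {attribute}: {value}")
    return lines


print("\n".join(render_tree(facts)))
```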

Interview

In the interview window, text generated by BlawxBot is displayed on a blue background, and text that you type into the chat is displayed on a grey background.

How BlawxBot Asks Questions

BlawxBot is aware of the Blawx-specific ideas of categories, objects, attributes, and values. It asks two kinds of questions:

- Do you want to add data?

- What data do you want to add?

Asking If You Want to Add Objects and Values

BlawxBot will ask you whether you want to create additional objects, or to set values for the attributes of objects that have already been created. If it asks this sort of question, you must answer yes (note: lower case) to add an object or value. All other answers, including "Yes", are treated as "no".
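A minimal sketch of the rule just described, written as a hypothetical Python helper (`wants_to_add` is not part of Blawx): only the exact, lower-case string yes counts as agreement, and the comparison here is exact, with no trimming or case folding.

```python
def wants_to_add(answer: str) -> bool:
    # Only the exact lower-case string "yes" counts as agreement;
    # "Yes", "y", "sure", or anything else is treated as "no".
    return answer == "yes"


print(wants_to_add("yes"), wants_to_add("Yes"))
```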

Asking for Objects and Values

If you say that you want to add an object, BlawxBot will ask you what object you want to add. If you say that you want to add a value, it will ask you what value you want to add. Your answer must be the text representation of the value, as it would appear in the "Code" part of the output section in the test editor.

For objects, whether you are creating one or referring to one in an attribute, you must use text with no spaces that does not start with a capital letter.

For numbers, enter the digits and any decimal places with no thousands separators (for example, 1234.5 rather than 1,234.5).
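The object and number rules above can be checked before typing an answer. This is a sketch only: `is_valid_object_name` and `is_plain_number` are hypothetical helpers that test just the rules stated here, and accepting a leading minus sign for negative numbers is an assumption not covered by this page.

```python
import re


def is_valid_object_name(name: str) -> bool:
    # Documented rules only: non-empty, no whitespace,
    # and the first character is not a capital letter.
    return bool(name) and re.search(r"\s", name) is None and not name[0].isupper()


def is_plain_number(text: str) -> bool:
    # Digits with an optional decimal part and no thousands separators.
    # The optional leading "-" is an assumption for negative numbers.
    return re.fullmatch(r"-?\d+(\.\d+)?", text) is not None


print(is_valid_object_name("big_dog"), is_plain_number("1234.5"))
```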

For dates, you must use the format date(YYYY,M,D). For example, "January 3, 2001" would be date(2001,1,3). Note that you do not use leading zeros for the month and day.
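If you are preparing answers from other data, the date format can be produced from a Python `datetime.date`. The helper name `to_blawx_date` is hypothetical; formatting the integer fields directly is what drops the leading zeros.

```python
from datetime import date


def to_blawx_date(d: date) -> str:
    # Integers are printed without padding, so January 3 becomes 1 and 3,
    # giving date(2001,1,3) rather than date(2001,01,03).
    return f"date({d.year},{d.month},{d.day})"


print(to_blawx_date(date(2001, 1, 3)))  # date(2001,1,3)
```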

Similarly, durations must be in the format duration(S,Y,M,D). Here, S is either 1 for "into the future" or -1 for "into the past", and Y, M, and D represent the number of years, months, and days respectively.
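The same approach works for durations. In this sketch, `to_blawx_duration` is a hypothetical helper, and requiring Y, M, and D to be non-negative is an assumption based on the direction being carried by S.

```python
def to_blawx_duration(years: int, months: int, days: int, future: bool = True) -> str:
    # Assumption: the direction lives in S, so Y, M and D should not be negative.
    if min(years, months, days) < 0:
        raise ValueError("years, months and days must be non-negative")
    sign = 1 if future else -1  # S: 1 = into the future, -1 = into the past
    return f"duration({sign},{years},{months},{days})"


print(to_blawx_duration(2, 6, 0))                 # duration(1,2,6,0)
print(to_blawx_duration(0, 0, 30, future=False))  # duration(-1,0,0,30)
```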

How Does BlawxBot Decide What To Ask?

BlawxBot will ask you questions in the following order, if they are relevant, and if there are not any valid answers based on the facts it already has:

1. BlawxBot goes through the relevant categories one by one. The order of the categories is arbitrary.

2. If there are objects in that category, it goes through each object one by one.

3. For each object, if there are relevant attributes, it goes through each attribute one by one.

4. For each attribute, it asks you to add values to that attribute until you indicate that there are no more values to add, then starts over at step 1.

5. If there are no objects in the category, or if all of the values have been collected for all the objects in the category, it asks whether you would like to add another object, and starts over at step 1.

6. If there are no more relevant questions to ask, it says that it could not find an answer.
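The order above can be sketched as a function that picks the next question from the current state. Everything here is hypothetical: the function name, the dictionaries standing in for the reasoner's relevance information, and the sets recording which attributes and categories the user has finished with. It assumes the caller has already checked that there is no answer, and calls it again after every reply, which is what starting over at 1 amounts to.

```python
from typing import Optional


def next_question(
    relevant: dict[str, list[str]],            # category -> relevant attributes
    objects: dict[str, list[str]],             # category -> known objects
    closed_attributes: set[tuple[str, str]],   # (object, attribute) pairs marked "no more values"
    closed_categories: set[str],               # categories marked "no more objects"
) -> Optional[tuple[str, ...]]:
    for category, attributes in relevant.items():      # go through relevant categories
        for obj in objects.get(category, []):          # go through each object
            for attribute in attributes:               # go through each relevant attribute
                if (obj, attribute) not in closed_attributes:
                    # Asked repeatedly until the user says there are no more values.
                    return ("value", obj, attribute)
        if category not in closed_categories:
            # No objects, or all values collected: offer to add another object.
            return ("object", category)
    return None  # no relevant questions left: "could not find an answer"


print(next_question({"person": ["age"]}, {"person": ["alice"]}, set(), set()))
```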

How Does BlawxBot Know What's Relevant?

At the start of the interview, and every time you change the information (either by adding information, or by indicating that there is no more information of a certain type), BlawxBot accesses the /interview endpoint for your test and gets information from the reasoner about whether there is an answer and which additional questions are relevant.

If there is an answer, it stops the interview and displays the answer.
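The re-query cycle can be sketched as a loop. This is not BlawxBot's code: `query_reasoner` is a hypothetical stand-in for a request to the /interview endpoint, `ask_user` stands in for the chat exchange, and the result keys "answer" and "next_question" are invented for illustration. For the endpoint's actual request and response format, see the test API documentation.

```python
from typing import Any, Callable, Optional

Facts = dict[str, Any]


def run_interview(
    query_reasoner: Callable[[Facts], dict],
    ask_user: Callable[[str, Facts], Facts],
    facts: Facts,
) -> Optional[Any]:
    """Re-query after every change until there is an answer or no question left."""
    while True:
        result = query_reasoner(facts)       # stand-in for calling /interview
        if result["answer"] is not None:
            return result["answer"]          # an answer ends the interview
        question = result["next_question"]
        if question is None:
            return None                      # "could not find an answer"
        facts = ask_user(question, facts)    # the user's reply changes the facts


# Tiny stubs to show the flow: an answer appears once "alice.age" is known.
def fake_reasoner(facts: Facts) -> dict:
    if "alice.age" in facts:
        return {"answer": "eligible", "next_question": None}
    return {"answer": None, "next_question": "alice.age"}


def fake_user(question: str, facts: Facts) -> Facts:
    return {**facts, question: "42"}


print(run_interview(fake_reasoner, fake_user, {}))  # eligible
```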

For information on how the endpoint calculates the relevance of questions, see the documentation about the test APIs.

Tips for Using BlawxBot

Writing Tests for BlawxBot Expert Systems

If you have a test that defines some facts, poses a question, and gives the expected answer when run in the test editor, that same test will work in BlawxBot, but BlawxBot will not ask any questions. That is because BlawxBot also knows the facts in the test, so it does not need to ask any questions to find an answer.

If you want BlawxBot to ask for inputs, you need to create a test that returns "no models" when you click "Run", so that BlawxBot must ask the user for input before it can find an answer. Many tests written for use in BlawxBot will include only a question, and no facts.

How BlawxBot Deals with Existing Objects

Note that if there are objects in the rules or the test, BlawxBot will ask the user about attributes for those objects before asking whether any objects should be added to the category.

BlawxBot Will Sometimes Ask the User to Answer the Question

It's not being lazy, we promise. BlawxBot is not (yet) aware of which attribute represents the question encoded in the test. So, depending on the order of questions, it may ask the user to provide a value for that attribute themselves. If they do, the interview ends immediately, because BlawxBot now has the answer to the test question.

When using BlawxBot, if it asks whether you would like to add a value to the attribute that is being queried in the test, just answer "no".